You ran your first pulse survey. The responses are in. You open the dashboard, look at the numbers, and think: now what?

You're not alone. According to industry research, 58% of companies collect employee engagement data and then do nothing meaningful with it. The survey goes out, the results sit in a tab or a dashboard nobody reopens, and three months later someone suggests running another one. Your team notices. They noticed the first time.

What you do after a pulse survey matters more than the survey itself. An action plan for pulse survey results doesn't need to be complicated -- it needs to be visible. This article walks through five concrete steps for turning survey data into action your team can actually see, plus the mistakes that quietly kill the whole process.

The feedback loop that most teams break

Every pulse survey creates an implicit promise: we asked because we care, and we'll do something with what you told us.

When that promise goes unfulfilled, something predictable happens. Response rates drop. Answers get less honest. The most engaged people -- the ones who gave thoughtful feedback the first time -- are the first to stop participating. According to Gallup, workgroups in the bottom quartile for acting on survey results saw engagement scores decrease by 3%. Not flatline. Decrease. Asking for feedback and ignoring it is worse than not asking at all.

McKinsey's research puts it bluntly: the number one driver of so-called "survey fatigue" isn't survey frequency. It's the perception that nothing will change. Culture Amp reframed this as "lack-of-action fatigue" -- and it's the right name. Your team isn't tired of answering questions. They're tired of answering questions that go nowhere.

The fix is a closed feedback loop: ask, listen, act, tell them what you did, then ask again. Most teams break this loop at the third stage: they ask and listen, but never act. The five steps below are designed to make sure you don't.

Step 1: Read the data without reacting

Your first instinct when you see survey results will be wrong. Not always factually wrong, but emotionally wrong. A low score on "I feel supported by my manager" will feel personal. A 4.8 on "I enjoy working with my team" will feel like vindication. Neither reaction is useful yet.

Give yourself 24 hours before doing anything with the data. Read it, note your first reactions, and then close the dashboard. Sleep on it. The goal is to separate the signal from the emotional noise.

There are two specific traps to avoid in the first 24 hours:

- The explain-away reflex. You see a low score and immediately think, "Well, that was the week we had the server outage -- it's not representative." Maybe. But if you explain away every uncomfortable result, you'll never act on anything uncomfortable.

- The fix-everything urge. You see three problem areas and want to address all of them by Friday. This leads to vague commitments and zero follow-through. Resist it.

After 24 hours, come back with fresh eyes. The scores that still bother you are the ones worth investigating. The ones you've already forgotten probably aren't urgent.

Step 2: Identify one theme, not ten

This is the hardest step, and it's where most teams fail. Pulse survey data will surface multiple themes -- workload, communication, growth opportunities, team dynamics, manager support. Your instinct will be to address all of them. Don't.

Pick one theme. Just one. Choose the theme that is most actionable in the next couple of weeks, not the one that's most important in the abstract. "Workload is unsustainable" might be the biggest problem, but if solving it requires a hiring plan and budget approval, it's not the right pick for this cycle. "People don't know what's happening outside their team" might be solvable with a 15-minute weekly update.

Here's a practical filter for choosing your one theme:

- Is the score a trend or a blip? If a metric dropped this week but was stable for the past month, it might be noise. If it's been sliding for three weeks, it's a signal.

- Can you do something about it in the next two weeks? If yes, it's a candidate. If not, note it for later but don't promise action you can't deliver.

- Does it affect the whole team or one person? Pulse surveys surface team-level patterns. If you suspect a score reflects one person's situation, have a direct conversation instead.

One theme per cycle. Not because the other themes don't matter, but because one visible action builds more trust than five half-finished promises.
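If your weekly scores live in a spreadsheet or export, the trend-or-blip question from the filter above can be roughed out in a few lines. This is a minimal sketch, not a statistical test: the function name `classify_change`, the three-week window, and the 0.1-point tolerance are all assumptions chosen for illustration, so tune them to your own scale and cadence.

```python
from typing import Sequence


def classify_change(scores: Sequence[float], window: int = 3, tolerance: float = 0.1) -> str:
    """Label the latest movement in a weekly score series.

    scores: weekly averages, oldest first.
    window: consecutive weekly drops that count as a trend (assumed: 3).
    tolerance: smallest drop treated as a real move (assumed: 0.1 points).
    """
    if len(scores) < 2:
        return "not enough data"

    # Week-over-week differences, oldest first.
    deltas = [later - earlier for earlier, later in zip(scores, scores[1:])]

    # Count consecutive drops, walking back from the most recent week.
    consecutive_drops = 0
    for delta in reversed(deltas):
        if delta < -tolerance:
            consecutive_drops += 1
        else:
            break

    if consecutive_drops >= window:
        return "trend"
    if consecutive_drops >= 1:
        return "possible blip"
    return "stable"


# Sliding for three weeks in a row: treat it as a signal.
print(classify_change([3.8, 3.6, 3.4, 3.2]))       # trend
# Stable for a month, then one bad week: might be noise.
print(classify_change([4.1, 4.1, 4.1, 4.1, 3.6]))  # possible blip
```

A "possible blip" is not a reason to ignore the score. It is a reason to wait one more cycle before promising action on it.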

Step 3: Share results transparently with the team

This is where many team leads freeze. Sharing survey results feels vulnerable -- what if the scores are bad? What if people disagree with the interpretation? What if sharing low scores makes things worse?

Share them anyway. Hoarding survey data is the fastest way to kill trust in the process. If your team gave you honest feedback and never hears what the aggregate picture looks like, they'll assume the worst -- that you saw the results and decided to ignore them.

What to share:

- Overall trends. "Our satisfaction score has been steady at 4.1 for the past month, but workload scores dropped from 3.8 to 3.2 over the last three weeks."

- The theme you identified. "The clearest signal this cycle is around workload -- specifically, that it's been creeping up and people feel stretched."

- What you plan to do about it. One sentence. Specific. "I'm going to review our current project commitments this week and cut or defer at least one non-critical item."

What not to share:

- Individual scores or verbatim quotes from small teams. If your team has 12 people and someone wrote a distinctive open-ended comment, paraphrasing it protects anonymity. Share themes, not transcripts.

- Every data point. Your team doesn't need a 20-slide deck. They need a two-minute summary -- what the data says, what you're doing about it, when they'll hear back.

Where to share depends on your team's communication habits. A Slack message works. A five-minute segment in your weekly standup works. An email works. The format matters far less than the act of sharing at all.
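If you want the summary to look the same every cycle, a small template helps keep it to the essentials described above. The sketch below is one possible shape, assuming you paste the result into Slack or an email yourself. The `CycleSummary` class, its field names, and the example follow-up sentence are hypothetical and not part of any survey tool.

```python
from dataclasses import dataclass


@dataclass
class CycleSummary:
    overall_trend: str   # one sentence on the headline numbers
    theme: str           # the single theme picked this cycle
    planned_action: str  # one specific, time-bound commitment
    follow_up: str       # when the team will hear back


def format_summary(summary: CycleSummary) -> str:
    """Render the summary as a short plain-text message."""
    lines = [
        "Pulse survey summary",
        f"What the data says: {summary.overall_trend}",
        f"Clearest signal: {summary.theme}",
        f"What I'm doing about it: {summary.planned_action}",
        f"When you'll hear back: {summary.follow_up}",
    ]
    return "\n".join(lines)


message = format_summary(
    CycleSummary(
        overall_trend="Satisfaction is steady at 4.1, but workload dropped from 3.8 to 3.2 over three weeks.",
        theme="Workload has been creeping up and people feel stretched.",
        planned_action="This week I'll review our project commitments and cut or defer at least one non-critical item.",
        follow_up="I'll report back when the next survey goes out.",
    )
)
print(message)
```

The template deliberately has no field for individual scores or verbatim comments, so the default output stays at the level of themes.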

Step 4: Commit to one action, publicly

Notice the word publicly. A commitment you make in your head doesn't count. A commitment you write in your own notebook doesn't count. The action needs to be visible to the people who gave you the feedback.

Good commitments are specific, small, and time-bound. "We're going to improve communication" is not a commitment -- it's a wish. "Starting next Monday, I'll post a five-minute weekly update covering what each team is working on and any cross-team dependencies" is a commitment.

Here's the difference in practice:

- Vague: "We'll work on improving work-life balance."

- Specific: "I'm canceling recurring meetings on Fridays that don't have a clear agenda. Starting this week."

- Vague: "We hear your concerns about career growth."

- Specific: "I'll schedule a 30-minute career conversation with each person on the team over the next three weeks."

One action. Not a task force, not a committee, not a "working group to explore options." One concrete thing that will be visibly different next week. Gallup's research shows that workgroups in the top quartile for post-survey action planning saw engagement scores increase by 10%. The action doesn't have to be big. It has to be real and it has to be visible.

Step 5: Follow up in the next survey cycle

This is the step that separates teams that build lasting trust from teams that run surveys as a checkbox exercise. When the next pulse survey comes around -- whether that's next week or next month -- you need to close the loop.

Closing the loop means three things:

- Report what you did. "Two weeks ago, I committed to canceling Friday meetings without agendas. I've canceled three recurring meetings so far."

- Check whether the scores moved. Did the workload score improve? Did the related theme shift? If yes, say so -- your team should know their feedback led to a measurable change. If not, say that too -- "the scores haven't moved yet, so I'm going to keep this as a focus area."

- Decide whether to continue or move on. If the theme is improving, you might pick a new focus area for the next cycle. If it's stuck, dig deeper -- the action you chose might not have been the right lever.
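Checking whether the scores moved can be as simple as comparing the average before your action with the average after it. The sketch below assumes weekly team averages on the same scale. The function name `score_shift` and the 0.1-point tolerance are illustrative assumptions, and with only a few weeks of data the result is a prompt for conversation rather than proof that the action worked.

```python
from statistics import mean
from typing import Sequence


def score_shift(before: Sequence[float], after: Sequence[float], tolerance: float = 0.1) -> str:
    """Compare the average score before and after an action.

    tolerance: smallest change in the average treated as real movement
    (assumed: 0.1 points).
    """
    if not before or not after:
        raise ValueError("need at least one score on each side of the action")

    change = mean(after) - mean(before)
    if change > tolerance:
        return f"improved by {change:.1f}"
    if change < -tolerance:
        return f"declined by {abs(change):.1f}"
    return "no clear movement yet"


# Workload scores for the two weeks before and after the commitment.
print(score_shift(before=[3.4, 3.2], after=[3.5, 3.7]))  # improved by 0.3
```

Whatever the function returns, report it to the team in plain words, including the "no clear movement yet" case.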

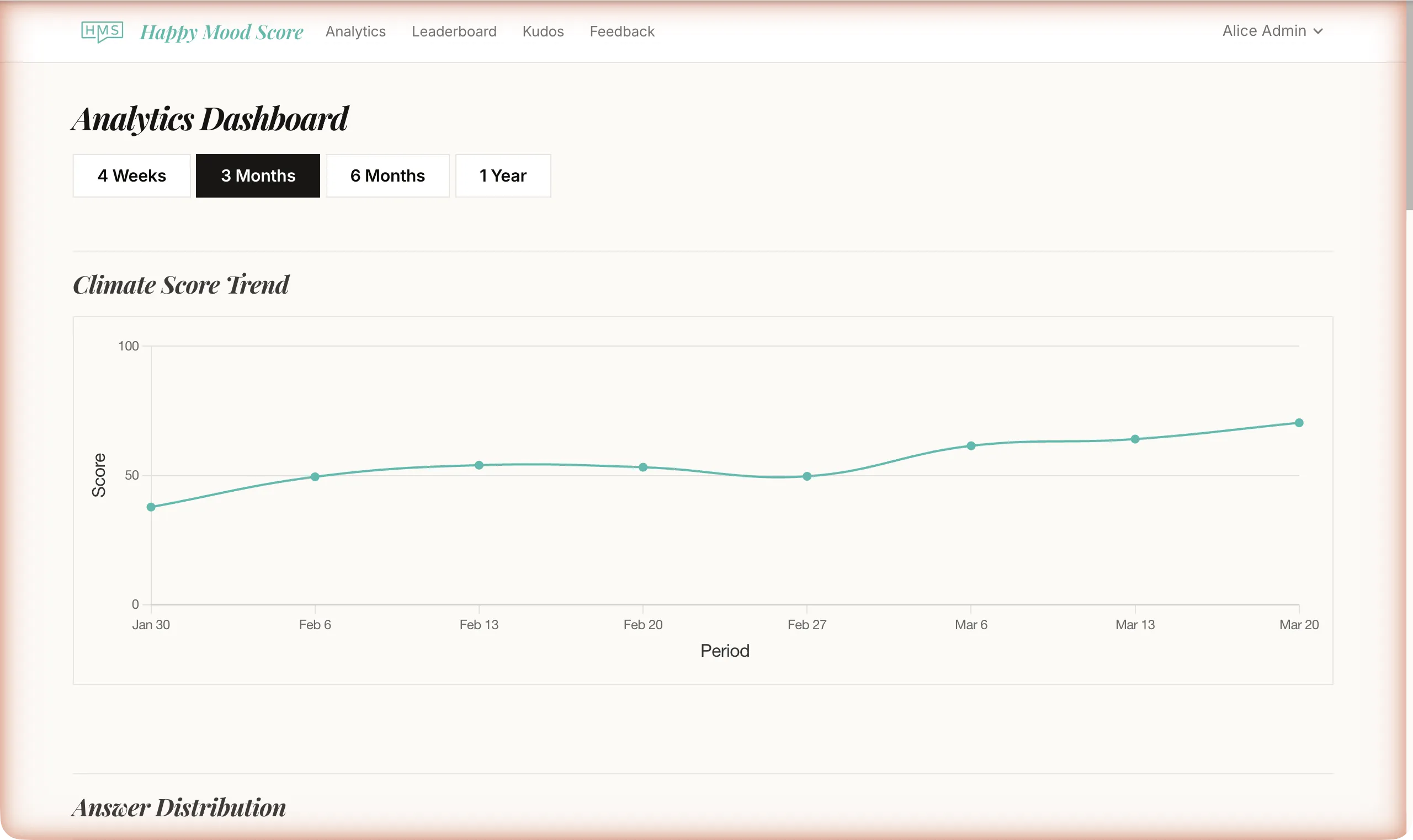

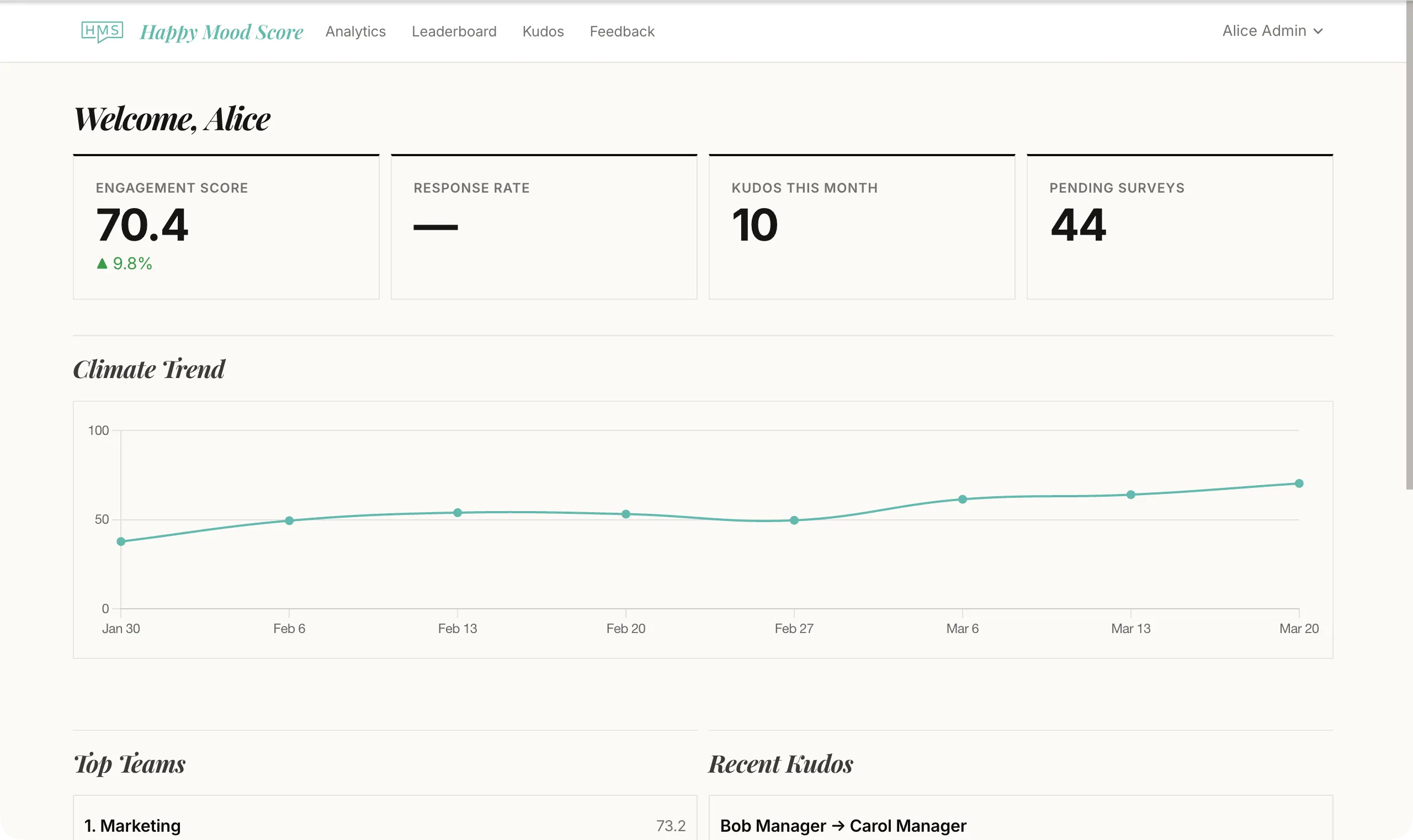

This is where trend data becomes genuinely powerful. You're not comparing this week's score to a benchmark in a report -- you're comparing it to last week, and the week before, and the week before that. You can see whether your action moved the needle, and your team can see it too.

Employees who see their feedback acted on are 1.9 times more likely to be engaged than those who don't. That's not a marginal improvement. That's nearly double. And the effect compounds: each cycle where feedback leads to visible change makes the next survey more honest, more participatory, and more useful.

What not to do: the anti-patterns that kill trust

The five steps above tell you what to do. These are the mistakes that undo the work, often without you realizing it.

The black hole. You collect feedback and nothing happens. No summary, no action, no acknowledgment. Your team fills out the next survey with lower effort, if they fill it out at all. This is the most common failure mode -- 58% of companies fall into it -- and it's the one that turns pulse surveys from a trust-building tool into a trust-eroding one.

The over-promise. You share results enthusiastically and commit to five changes at once. Three weeks later, you've delivered on one and quietly dropped the other four. Your team doesn't remember the one you did -- they remember the four you didn't. Commit to one action. Deliver it. Then commit to the next one.

The cherry-pick. You share the high scores and quietly skip the low ones. Your team will notice what's missing. If satisfaction is 4.5 but workload is 2.8, sharing only the 4.5 tells your team you're not interested in hearing what's hard. Share the uncomfortable numbers. That's where the value is.

The interrogation. A low score appears and you start trying to figure out who gave it. You cross-reference timing, team membership, recent conversations. Even if you never confront anyone, the detective work changes how you interact with people you suspect -- and they notice. This is the single fastest way to destroy survey trust. The low score is data. Use it as data. Don't use it as a clue.

The one-and-done. You act on one survey, declare the issue resolved, and never mention it again. Engagement isn't a problem you solve once. It's a practice you maintain. The feedback loop is a loop -- it works because it keeps running, not because it ran once.

Closing the loop: why visible action drives everything

The entire pulse survey process -- the questions, the anonymity, the frequency, the tool you use -- exists to support one outcome: a team that trusts you enough to tell you the truth, and a leader who does something useful with it.

That trust is built in the follow-through, not in the asking. One survey with visible follow-through builds more trust than ten surveys with perfect anonymity settings and zero action. The mechanics matter less than the habit: ask, listen, pick one thing, do it, tell them you did it, ask again.

Tools like Happy Mood Score make this easier by automating the parts that shouldn't require manual work -- tracking scores over time, showing trend lines week by week, surfacing changes automatically so you can focus on the "act" and "tell them" steps instead of rebuilding a spreadsheet every cycle. But the tool is the scaffolding. The habit is the structure.

Start this week. Look at your most recent survey results. Pick one theme. Tell your team what you found and what you're going to do about it. Do the thing. Then, next cycle, tell them what changed.

That's the whole action plan. Five steps, repeated. The survey is just the beginning.