When your team was 12 people, you could feel the energy in the room. You noticed when someone went quiet. You caught things early because you were literally sitting next to each other. Then you hired ten more. Then ten more. Now there are Slack channels nobody reads and people you only see in all-hands.

Pulse surveys are how you fix that. Not the 80-question corporate kind that HR sends once a year, with results nobody ever looks at. Short ones. One question a week. The kind you answer in ten seconds from your inbox and move on.

If your team is somewhere between 20 and 100 people and you're starting to lose track of how everyone's doing, this is everything you need to know to make pulse surveys actually work.

What a pulse survey is (and what it isn't)

A pulse survey is just a short, recurring check-in with your team. One to five questions, sent weekly or biweekly. You're taking a quick read on how people feel -- like checking a pulse. Quick, frequent, and designed to catch changes early.

Here's how they're different from those annual engagement surveys you've probably been subjected to at a previous job:

- They're short. One to five questions, not 50. That's the whole game. Short surveys actually get answered.

- They're frequent. Weekly or biweekly. You're watching a trend line, not staring at a single snapshot from six months ago.

- They're lightweight. No consultants. No two-week "analysis phase." You send them, people answer, you see the results. That's it.

- They're useful. Because you get fresh data every week, you can actually do something about it before the problem gets worse.

What pulse surveys aren't: they're not performance reviews, they're not a way to evaluate individuals, and they don't replace actual conversations with your teammates. Think of them as a signal. A way to know where to pay attention.

Why the annual survey thing doesn't work at a growing company

If you've ever worked at a big company, you know the drill. A 60-question survey shows up in October. You spend 25 minutes on it. The results come back in January. Someone presents a slide deck. Nothing changes. Next year, you click through it as fast as possible.

Annual surveys were designed for giant, slow-moving companies where not much changes year to year and there's a whole analytics team to crunch the numbers. That's not you.

At a growing company, they break in three specific ways:

The data's already old. Your team looks completely different than it did six months ago. The person who was frustrated in October left in December. By the time the results land, you're reading about a company that doesn't exist anymore.

Nobody wants to sit through them. Your team has stuff to build. A 30-minute survey feels like homework. Response rates tank, and now your data isn't even reliable because the only people who filled it out are the ones who had a free afternoon.

Nothing happens after. This is the big one. People take the time to share how they feel, and then... silence. No follow-up, no changes, no acknowledgment. Next year, they don't bother. Can you blame them?

Pulse surveys fix all of this. Fresh data every week. Takes a minute to fill out. And because you're asking frequently, you can actually respond to what you hear -- which is the part that makes people trust the process.

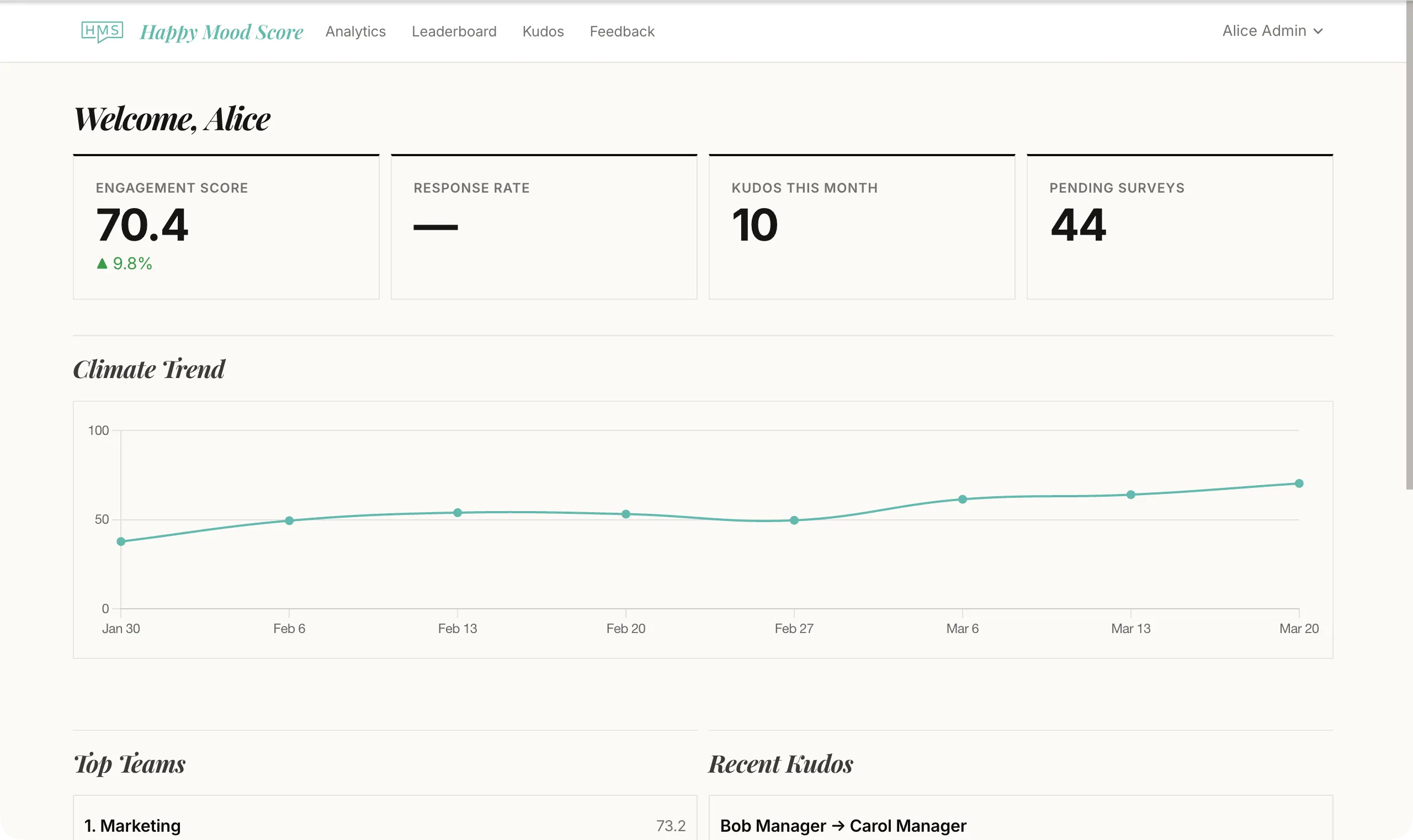

What this actually looks like, week to week

To make this concrete, here's what a typical week looks like.

Monday morning: Everyone on the team gets an email with one question. Something like: "On a scale of 1 to 5, how supported do you feel by your manager?" You tap a number. Done. Ten seconds.

By Wednesday: Most people have answered. A couple of stragglers get a short reminder. Nothing pushy.

Thursday: Whoever runs these checks the results. Average score this week is 3.8, down from 4.1 the last time this question ran. The engineering team dropped to 3.2. That's a signal.

Friday: The lead brings it up casually in standup. "Hey, support scores dipped this week -- anything going on?" Turns out the re-org left some people unclear on priorities. It gets addressed in the next sprint planning.

Next Monday: A new question goes out. When the manager-support question comes back around, you'll see if the score bounced back. That's the loop.
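
If you're curious what the Thursday check amounts to, here's a minimal Python sketch. It assumes responses arrive as anonymous (team, score) pairs and treats a drop of 0.3 points as worth a look. Both the data shape and the 0.3 cutoff are illustrative assumptions, not something any particular tool prescribes.

```python
from statistics import mean


def team_averages(responses):
    """Average the 1-5 scores per team. `responses` is a list of (team, score) pairs."""
    by_team = {}
    for team, score in responses:
        by_team.setdefault(team, []).append(score)
    return {team: round(mean(scores), 1) for team, scores in by_team.items()}


def flag_dips(previous, current, drop=0.3):
    """Return teams whose average fell by at least `drop` since the previous reading.

    `drop` is a hypothetical cutoff; pick whatever counts as a signal for your team.
    """
    return sorted(
        team
        for team, avg in current.items()
        # Round the difference so float noise (4.1 - 3.8) doesn't hide a real 0.3 drop.
        if team in previous and round(previous[team] - avg, 2) >= drop
    )
```

Feed it the previous reading and this week's reading, and whatever comes back from `flag_dips` is the list of teams to ask about on Friday.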

How often should you do this?

For most teams, weekly or biweekly is the sweet spot. Weekly gives you the most current picture. Biweekly is fine if you're just starting out.

Monthly is too slow. Things move fast at a growing company. If something goes sideways in week one and you don't measure again until week five, you've missed the window to fix it.

How many questions?

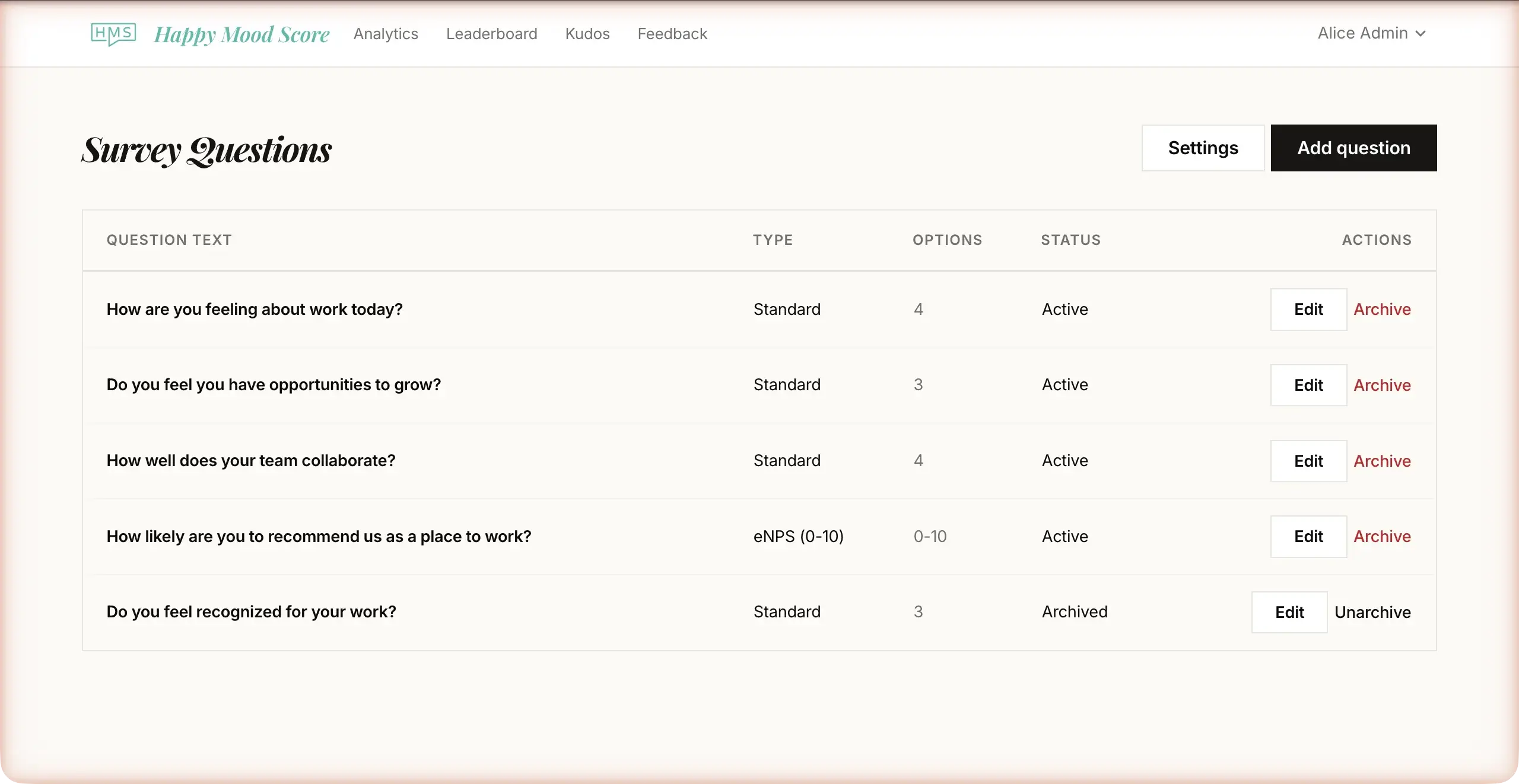

One to three. Seriously. If you're just getting started, one is plenty. Rotate through different topics each week -- satisfaction, workload, growth, belonging, manager support -- and over a few weeks you get a full picture without exhausting anyone.

Here's the math that matters: a one-question survey with a 95% response rate tells you more than a five-question survey with a 60% response rate, because the people who skip a survey are rarely a random sample of the team. Keep it short.
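
One way to see why response rate matters so much: on a 1-to-5 scale, the people who didn't answer could have said anything. The sketch below works out the worst-case range for the whole team's average. The function name and the worst-case framing are illustrative assumptions, one reading of the claim rather than a formal statistical result.

```python
def true_average_range(observed_avg, response_rate, low=1, high=5):
    """Worst-case range for the whole team's average on a low-to-high scale.

    Assumes every non-respondent could have answered anywhere from `low` to `high`.
    """
    missing = 1 - response_rate
    lowest = response_rate * observed_avg + missing * low
    highest = response_rate * observed_avg + missing * high
    return round(lowest, 2), round(highest, 2)


print(true_average_range(4.0, 0.95))  # (3.85, 4.05)
print(true_average_range(4.0, 0.60))  # (2.8, 4.4)
```

With 95% of the team answering, an observed 4.0 can't hide much. With 60% answering, the same 4.0 could be anywhere from a worrying 2.8 to a great 4.4.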

How to send them

Email works best. It's universal, everyone checks it, and the survey should be answerable right there in the email -- one click, no login required. The moment people have to open a separate app and sign in somewhere, plenty of them won't bother.

Picking the right questions

You don't need to invent these from scratch. There are proven questions for the dimensions that actually matter. Here are the five areas to rotate through:

Satisfaction. "How satisfied are you with your work this week?" -- The baseline. Are people generally happy or not?

Growth. "Do you feel like you're learning and growing in your role?" -- This one catches stagnation before it turns into a resignation letter.

Manager support. "How supported do you feel by your direct manager?" -- Uncomfortable to ask, incredibly useful to know. Most people don't leave companies, they leave managers. You've heard that before because it's true.

Belonging. "Do you feel like you belong on this team?" -- Especially important after rapid hiring. New people need time to feel like they're part of something, and this tells you whether that's happening.

Workload. "How manageable is your workload right now?" -- Burnout doesn't announce itself. By the time someone says "I'm burned out," they've been struggling for weeks. This catches it early.

One question per week, rotating through these five. After five weeks, you have a complete picture. Then you do it again, and now you have trends. That's when it gets really useful.
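
If you're scripting this yourself, the rotation is a few lines of Python. This is a minimal sketch that picks the question by counting whole weeks since a start date you choose. The `question_for` helper and the start date are hypothetical; the questions are the five listed above.

```python
from datetime import date

# The five questions from this article, in rotation order.
QUESTIONS = [
    ("Satisfaction", "How satisfied are you with your work this week?"),
    ("Growth", "Do you feel like you're learning and growing in your role?"),
    ("Manager support", "How supported do you feel by your direct manager?"),
    ("Belonging", "Do you feel like you belong on this team?"),
    ("Workload", "How manageable is your workload right now?"),
]


def question_for(day, start):
    """Return the (topic, question) pair for the week containing `day`.

    Counts whole weeks since `start` (an assumed first send date) so the
    rotation doesn't skip or repeat at year boundaries.
    """
    weeks_elapsed = (day - start).days // 7
    return QUESTIONS[weeks_elapsed % len(QUESTIONS)]


start = date(2024, 1, 1)  # hypothetical first Monday
topic, question = question_for(date(2024, 1, 8), start)
print(topic)  # Growth
```

Counting weeks from a fixed start date, rather than using the calendar week number, keeps the five-week cycle intact when the year rolls over.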

The anonymity thing matters more than you think

Let's be honest: if people think their boss can see what they said, they're going to say everything's fine. Every time. And then your data is useless -- or worse, it makes you think things are great when they're not.

Anonymity isn't a nice-to-have. It's the foundation. Here's what that actually means:

Nobody sees individual responses. Not the team lead, not the CEO. Results show up as team averages and trends. Never "Maria gave a 2 out of 5."

Small teams get extra protection. If only two people on a five-person team respond, showing the average basically reveals who said what. Good tools suppress results until enough people have responded -- usually four or five minimum. This is called a confidentiality threshold, and it matters a lot.

The tracking is separated. It's fine to know who hasn't responded yet (so you can send a reminder). It's not fine to connect a specific response to a specific person. These should be two different things.

You have to say all this out loud. Tell your team how anonymity works. Show them the thresholds. Explain what you'll see and what you won't. People don't trust surveys by default -- especially if they've been burned before. You earn trust by being transparent about the mechanics.
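
For the curious, here's roughly what the threshold and the separated tracking look like in code. This is a minimal Python sketch, not how any particular tool works: the `AnonymousPoll` class, the member names, and the threshold of four are all assumptions for illustration. A real tool would also need to avoid things like timestamps or submission order that could link a score back to a person.

```python
from statistics import mean

MIN_RESPONSES = 4  # assumed confidentiality threshold (the text suggests four or five)


class AnonymousPoll:
    def __init__(self, members):
        self.members = set(members)
        self.responded = set()  # WHO has answered, used only for reminders
        self.scores = []        # WHAT was answered, stored with no names attached

    def submit(self, member, score):
        """Record a score once per member; the name and the score are never stored together."""
        if member not in self.members or member in self.responded:
            return False
        self.responded.add(member)
        self.scores.append(score)
        return True

    def needs_reminder(self):
        """People who haven't answered yet. Says nothing about what anyone answered."""
        return sorted(self.members - self.responded)

    def team_average(self):
        """Return the average, or None while there are too few responses to show safely."""
        if len(self.scores) < MIN_RESPONSES:
            return None
        return round(mean(self.scores), 1)
```

With a five-person team, `team_average()` stays `None` until the fourth response arrives, while `needs_reminder()` works from the first one.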

If you're currently doing this on Google Forms, this is probably where it breaks down. Even with email collection turned off, people suspect their answers can still be traced back to them, and you have no easy way to prove otherwise. That skepticism is enough to tank your response quality. It's one of the biggest reasons teams move to something purpose-built.

What to do when you get the results (the part most people skip)

Here's where it all falls apart for most teams. They send the surveys, people respond, the data sits in a dashboard, nobody looks at it, people stop responding, and the whole thing dies in two months. Sound familiar?

The data is only useful if you do something with it. If you're not going to act on what people tell you, genuinely, don't ask. It's worse than not surveying at all because you've now promised people that their voice matters and then proved it doesn't.

Here's what works:

Give it a day. When the results come in, don't react immediately. Your first instinct is to explain away a low score or to fix everything at once. Sleep on it.

Pick one thing. You don't need to address every data point. Find the one trend that's most actionable right now. Manager support dropped on the engineering team? Workload scores are in the red for the third week? Focus there.

Share what you found. This is the move that changes everything. Tell the team what the data showed. "Workload scores have been dropping for two weeks, especially on the product side. I want to talk about that." That's it. That single act of transparency is what convinces people their answers actually go somewhere.

Commit to one thing. Not a ten-point action plan. One thing. "I'm going to look at sprint scope with the product team this week." Say it publicly.

Check next week. Did the scores move? If yes, tell people. If not, try something else. The point is the loop: ask, listen, act, check. Repeat.

When people see this loop working -- when they answer a survey, something changes, and then they're asked again -- response rates go up and the data gets more honest. That's when pulse surveys stop being a checkbox and start being genuinely useful.

The mistakes that kill this

You can predict exactly how pulse surveys fail. The same patterns show up every time:

Collecting feedback and doing nothing with it. People will fill out your first survey gladly. They won't fill out the fifth one if nothing changed after the first four. This is the number one killer.

Too many questions. Every question past three costs you responses. If your survey takes more than a minute, it's too long. People have work to do.

Too infrequent. Once a quarter isn't a pulse survey; it's just a shorter version of the annual survey. The whole point is the trend -- and you need weekly or biweekly data to see one.

Making it feel like a mandate. The moment surveys feel like something management is imposing on people, the quality of the data collapses. People click 3 on everything just to make it go away. It has to feel easy and optional, even if you'd really prefer 100% participation.

Not taking anonymity seriously. If one person suspects their response was traced back to them, the word spreads. Trust takes months to build and one incident to destroy.

Quoting open-text responses carelessly. If someone writes a specific comment on a small team, everyone can guess who said it, even without a name attached. Summarize themes, don't share direct quotes.

Getting started this week

This doesn't need to be a big project. The whole point of pulse surveys is that they start small. Here's what you can do this week:

1. Pick a tool. If your team is under 20 people, Happy Mood Score has a free tier that covers everything -- surveys, anonymity, scores, trends. Over 20, it's 15 euros a month flat, not per person. You could also use Google Forms, but you'll hit its limits fast.

2. Write one question. Literally one. "On a scale of 1 to 5, how satisfied are you with your work this week?" That's enough. You can get fancy later.

3. Send it Monday. Pick a day and stick with it. Monday works well -- people are setting up their week and the data's ready by mid-week when you can still act on it.

4. Check responses Wednesday. Below 70%? Send one short reminder. Don't nag.

5. Share the results Thursday. Even if they're boring. "Our satisfaction score this week was 4.1 out of 5. Thanks for responding." That's the whole thing. You've started the loop.

Next week, try a different question. The week after, compare the scores. By the end of the month, you'll know more about how your team feels than most growing companies figure out in a year.

The hard part isn't the survey. It's committing to listen.