You inherited a practice you probably didn't choose. Annual engagement surveys are what "professional companies" do, so at some point you put one on the calendar, picked a tool, and sent a 50-question survey to your whole team. Maybe you got decent response rates. Maybe the results came back six weeks later and you weren't entirely sure what to do with them.

Here's the thing: the annual survey wasn't designed for you. It was designed for companies with dedicated HR analytics teams, stable org charts, and the luxury of moving slowly. That's not a startup growing from 30 to 60 people in 18 months.

The pulse survey vs. annual survey question isn't really about preference -- it's about what actually works for how you operate. This article breaks down what each approach is built for, where each one falls short, and how to think about the comparison if you're running a team of 20 to 100 people.

What annual surveys were actually designed for

Annual engagement surveys have legitimate origins. In the 1990s and early 2000s, large consulting firms and enterprise HR departments popularized them as a way to benchmark large, relatively stable workforces. You'd survey 10,000 employees once a year, run the numbers against industry benchmarks, present findings to the board, and make structural changes over the following year.

That context matters. Annual surveys made sense when:

- Teams didn't change much year to year. Same people, same roles, same general structure.

- Surveys required significant manual work. Paper forms, data entry, statistical analysis. Doing it more than once a year wasn't practical.

- Results fed into long planning cycles. If your company plans two years out, a yearly data point is enough.

If any of that describes your company, stop reading and go enjoy your annual survey.

For everyone else: your team probably looks meaningfully different than it did six months ago. You've hired people, maybe lost a few, promoted someone, added a new team, changed your product direction. The conditions that made annual surveys sensible simply don't apply.

The feedback delay problem: 12 months is a lifetime at a startup

Imagine you have a thermometer that only tells you the temperature once a year.

You could use it to track whether winters are getting warmer over a decade. You couldn't use it to know whether to wear a coat today.

That's the core problem with annual surveys at a growing company. By the time the results reach you, the situation that produced them has already changed. The teammate who gave everything a 2 out of 5 in October either turned things around or left by January. The team morale dip that showed up in the data was caused by a rough product launch that's now three sprints in the past.

You can't act on stale data. Or rather, you can act, but you're treating a wound that's already healed or, worse, missing the new one that opened up while you were reading last year's report.

Look at the timeline. An annual survey typically runs 50-plus questions and takes 20 to 30 minutes to complete. Results take weeks to compile and present. By the time you're looking at the findings in a slide deck, you're usually six to eight weeks out from when the data was collected -- at a company where significant things can happen in a single sprint.

At a startup, the question isn't just "how do our people feel?" It's "how do our people feel right now, so I can do something about it this week?" Annual surveys can't answer that second question. They were never built to.

What pulse surveys catch that annual surveys miss

A pulse survey is short (one to five questions), recurring (weekly or biweekly), and designed to generate fast, actionable data. Response rates run above 85% for short surveys -- meaningfully higher than the 76% average for longer annual surveys, a gap that's growing as survey fatigue sets in.

But the response rate gap isn't the main thing. The main thing is what you catch.

Early signals. Burnout doesn't appear overnight. Neither does a team morale problem, or a breakdown in trust between a team and their lead. These things build up over weeks. Pulse surveys catch them when they're still early-stage and fixable. Annual surveys catch them when they're already a retention problem.

Real-time change. Pulse surveys reflect your current team -- the people who are actually there this week, in their actual roles. When someone new joins, they start contributing to the trend data immediately. When someone's situation changes, the scores shift with it. You're always looking at a live picture.

Team-level visibility. Because you're surveying frequently, you can spot differences between teams. Say engineering scores are holding steady while the product team has been trending down for three weeks. That's an operational signal you can actually act on.

The compounding effect. Week one of a pulse survey tells you something. Week eight tells you something much more valuable -- you're watching a trend line, not reading a single data point. After two months of weekly rounds, you have eight or so data points, which is more longitudinal insight than an annual survey would produce in years.
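To make the team-level and trend-line ideas concrete, here is a minimal Python sketch. It assumes each response arrives as a `(team, week, score)` tuple on a 1-to-5 scale, and it treats three consecutive weekly declines as the warning sign. The data shape, the helper names, and the three-week threshold are illustrative assumptions, not features of any particular survey tool.

```python
from statistics import mean


def weekly_averages(responses):
    """Group raw (team, week, score) responses into per-team weekly means.

    Returns {team: [mean for earliest week, ..., mean for latest week]}.
    """
    by_team = {}
    for team, week, score in responses:
        by_team.setdefault(team, {}).setdefault(week, []).append(score)
    return {
        team: [mean(weeks[w]) for w in sorted(weeks)]
        for team, weeks in by_team.items()
    }


def declining_teams(averages, run_length=3):
    """Return teams whose weekly mean fell in each of the last `run_length` weeks."""
    flagged = []
    for team, series in averages.items():
        if len(series) < run_length + 1:
            continue  # not enough history yet to call it a trend
        recent = series[-(run_length + 1):]
        # Every week must be strictly lower than the week before it.
        if all(later < earlier for earlier, later in zip(recent, recent[1:])):
            flagged.append(team)
    return sorted(flagged)


averages = {
    "engineering": [4.1, 4.0, 4.2, 4.1],
    "product": [4.3, 4.0, 3.7, 3.4],
}
print(declining_teams(averages))
```

Here engineering wobbles but recovers, so only product is flagged. With very small teams, a single response can move the weekly mean, so a longer run length may be a sensible trade-off.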

One useful way to frame this distinction: pulse surveys provide operational visibility, while annual surveys provide strategic perspective. At a startup, you need to operate well first. The strategic perspective can come later.

When annual surveys still make sense (honest answer: probably not yet)

Let's be fair to the annual survey. There are scenarios where it earns its place:

For external benchmarking. Annual surveys often use standardized question sets that let you compare your scores against industry benchmarks. If you're trying to understand how your team's engagement compares to similar companies, a benchmarked annual instrument can be useful. That said, benchmarking is most valuable when your team is stable enough that the benchmark actually represents who you are.

For board or investor reporting. Some investors and boards want to see formal engagement data on a regular basis. If an annual engagement survey is a reporting requirement, that's a legitimate reason to run one.

For topics that don't fit a short format. Some questions genuinely need context, nuance, and space to answer. Compensation philosophy, long-term career trajectory, cultural alignment -- these don't compress well into a one-to-five scale. A longer annual survey gives people room to reflect and write.

Here's the honest answer on timing: if your team is under 100 people and you've been running for less than three or four years, you probably don't have the stability, the benchmarking need, or the reporting requirement that justifies a full annual survey as your primary listening mechanism. Build the pulse habit first. You can layer in an annual deep-dive once you have a baseline to compare against.

The hybrid approach: pulse surveys + one annual deep-dive

Here's where the two formats actually complement each other -- and it's worth being concrete about how.

Pulse surveys run weekly or biweekly throughout the year. Short, focused, fast. One to three questions per round. You're tracking satisfaction, workload, belonging, manager support, and growth on a rotating basis. This is your operational layer: it tells you what's happening right now and lets you respond quickly.
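If it helps to see the rotation spelled out, the sketch below assigns two topics per round by cycling through the five areas listed above, so each one comes back within a few rounds and builds its own trend line. The two-per-round setting and the `rotation_schedule` helper are hypothetical choices for illustration; a real tool may schedule questions differently.

```python
from itertools import cycle, islice

# Topic areas from the paragraph above; the order is an arbitrary choice.
TOPICS = ["satisfaction", "workload", "belonging", "manager support", "growth"]


def rotation_schedule(topics, rounds, per_round=2):
    """Assign `per_round` topics to each of `rounds` pulse rounds.

    Topics are drawn from one endless cycle, so the next round always
    picks up where the previous one stopped.
    """
    stream = cycle(topics)
    return [list(islice(stream, per_round)) for _ in range(rounds)]


for number, round_topics in enumerate(rotation_schedule(TOPICS, rounds=5), start=1):
    print(f"Round {number}: {', '.join(round_topics)}")
```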

One annual survey goes deeper, once you have the pulse habit in place. Not 50 questions -- maybe 20 to 30. It covers things that need more space: compensation fairness, long-term growth trajectory, cultural alignment, confidence in leadership direction. This is your strategic layer: it informs bigger decisions about how you structure the team, what you invest in, and where you need to improve over the next year.

The combination works because the pulse surveys keep you responsive week to week, while the annual survey gives you a structured moment to zoom out. The pulse data also makes the annual survey more useful -- you're not starting from scratch, you're adding depth to a trend you've been watching all year.

The key word is "add," not "replace." A lot of teams start with an annual survey and think about adding pulse surveys as a supplement. The better sequence is the reverse: start with pulse surveys, get good at listening and acting on them, and then add an annual deep-dive when you're ready to go broader.

The comparison: pulse surveys vs. annual surveys

Here's how the two formats stack up across the dimensions that actually matter for a growing team:

| | Pulse surveys | Annual surveys |
|---|---|---|
| Length | 1–5 questions | 20–80+ questions |
| Time to complete | Under 2 minutes | 20–40 minutes |
| Frequency | Weekly or biweekly | Once a year |
| Average response rate | 85%+ | ~76% (and declining) |
| Time to results | Same week | Weeks after close |
| Data freshness | Current | 6–8 weeks old at delivery |
| Trend visibility | High (weekly data points) | Low (annual snapshots) |
| Actionability | High: act this week | Low: plan for next year |
| Setup effort | Low | Medium to high |
| Cost | Low to moderate | Moderate to high (often consultant-driven) |
| Best for | Operational visibility, fast feedback loops | Strategic benchmarking, deep qualitative data |
| Startup fit | Strong | Weak until team is stable |

The response rate gap deserves a second look, because it compounds over time. Start with a 76% response rate on your annual survey, and you're already missing nearly a quarter of your team. As years pass and people learn that nothing changes after the survey, that number keeps dropping. Pulse surveys start higher and can trend upward when people see their feedback actually acted on.

Getting your timing right

If you're deciding where to start, the decision is pretty straightforward.

Start with pulse surveys if: your team is under 100 people, you've never run regular surveys before, you want fast feedback loops, or you're worried about survey fatigue. That describes most growing startups.

Consider adding an annual survey if: you've been running pulse surveys for at least six months, your team is stable enough that an annual snapshot would actually reflect who you are, you have a benchmarking or reporting need, or you want to go deeper on topics that pulse surveys don't cover well.

Avoid running only an annual survey if: your team is changing rapidly, you want to catch problems early, you need to act on data within weeks rather than months, or your team has been burned by surveys in the past and needs to rebuild trust through consistent, responsive feedback loops.

One practical note: if you're leaning toward the hybrid model, sequence it intentionally. Run pulse surveys for at least a full quarter, and ideally six months, before you add an annual survey. Get your team used to a feedback loop that actually closes -- where they answer questions and see things change. Then launch the annual survey into that established culture of trust. You'll get better data and better buy-in.

Starting this week

You don't need a big project to start. Pick one question, send it Monday, share the result Thursday. That's the whole first week.
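The Thursday share-out needs only simple arithmetic, sketched below. The function assumes anonymous 1-to-5 scores for a single question. As an extra assumption not drawn from any specific tool, it withholds results when fewer than five people answered, so individuals can't be singled out in small groups. `weekly_result` and `MIN_RESPONSES` are hypothetical names.

```python
from statistics import mean

# Assumption: below this many answers, results are withheld to protect anonymity.
MIN_RESPONSES = 5


def weekly_result(scores, team_size, min_responses=MIN_RESPONSES):
    """Summarize one week's anonymous 1-5 answers to a single question.

    Returns None when too few people answered to share results safely.
    """
    if team_size <= 0:
        raise ValueError("team_size must be positive")
    if any(not 1 <= s <= 5 for s in scores):
        raise ValueError("scores must be on a 1-5 scale")
    if len(scores) < min_responses:
        return None
    return {
        "average": round(mean(scores), 1),
        "response_rate": round(100 * len(scores) / team_size),  # percent
        "responses": len(scores),
    }


print(weekly_result([4, 5, 3, 4, 4, 2, 5, 4], team_size=9))
```

Sharing the response rate alongside the average lets your team see whether participation itself is holding up week to week.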

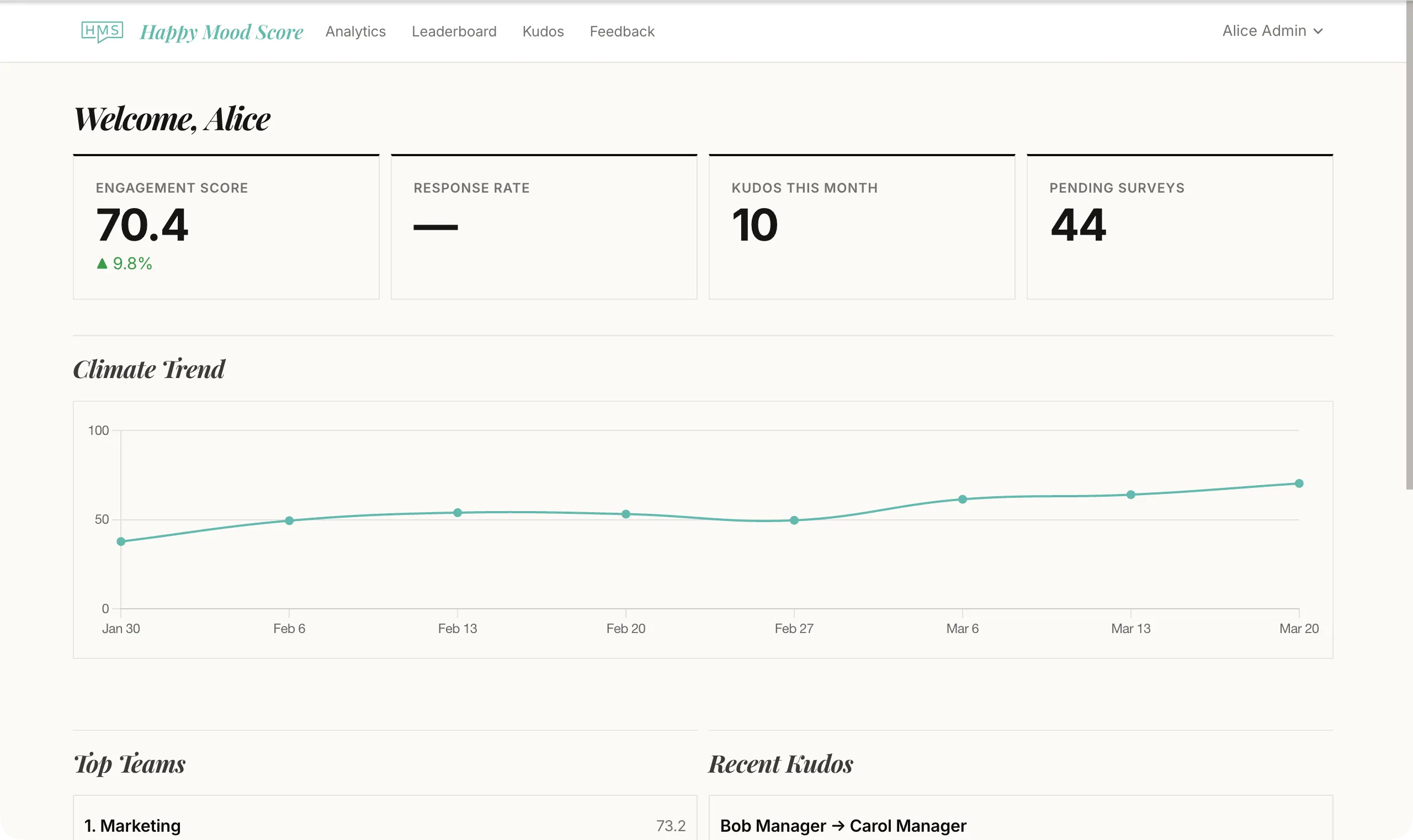

Tools like Happy Mood Score are built specifically for this kind of lightweight, recurring surveying -- short questions, anonymous responses, scores you can track over time without a spreadsheet or a consultant. But whatever tool you use, the most important thing is the habit: ask consistently, look at the results, do something about what you find, and tell your team what you did.

That loop -- ask, listen, act, repeat -- is what makes pulse surveys genuinely useful. It's also what makes your team trust that the next survey is worth their time.

Annual surveys can't run that loop at the speed a growing team needs. Pulse surveys can.