Everyone asks the same question when they start doing pulse surveys: how often should we send them? Weekly feels like a lot. Monthly feels safe. Quarterly feels... reasonable?

Here's a clear answer: for most teams between 20 and 100 people, weekly or biweekly is the right cadence. Not monthly. Definitely not quarterly. This article explains why, addresses the survey fatigue concern head-on, and helps you figure out where exactly on that spectrum your team falls.

The survey fatigue myth (and what actually causes it)

Let's get this out of the way first, because it's the reason most teams default to monthly or quarterly and then wonder why nobody's filling out their surveys anymore.

Survey fatigue is real. But it's not caused by frequency. It's caused by length and inaction.

Think about what actually burns people out on surveys. It's the 45-minute annual engagement assessment that shows up in October. It's the 20-question form that requires a login to a tool they've never heard of. It's the survey that asks the same questions year after year while nothing visibly changes. That's the experience people are reacting to when they say "we can't survey too often."

A one-question pulse survey that takes ten seconds to answer from your inbox? Nobody gets tired of that. You could send it every single day and people would keep answering -- as long as they believe their answers are going somewhere.

The research backs this up. Survey fatigue is consistently linked to two things: surveys that take 15 minutes or more, and teams that never see any action taken on results. Frequency isn't in the top five causes. Not even close.

So when someone on your leadership team says "we don't want to bug people too often," gently push back. The real question isn't how often you're asking. It's whether you're acting on what you hear.

Weekly vs. biweekly vs. monthly: what each one actually gets you

Here's how the three most common cadences play out in practice.

Weekly

Weekly is the gold standard for teams that are serious about staying close to how their employees feel. You send one question on Monday, most people answer by Wednesday, and you have fresh data to act on before the week is out.

The advantage is speed. If something goes sideways -- a re-org announcement, a difficult sprint, a manager conflict -- you'll see it in the scores within days, not weeks. That window to respond matters. The faster you catch a problem, the easier it is to fix.

The catch is commitment. Weekly surveys only work if someone is actually looking at the results every week and doing something with them. If results pile up in a dashboard nobody checks, you'd be better off going biweekly and doing it well.

Keep it to one or two questions per survey, never more. The whole thing should be answerable in under a minute.

Biweekly

Biweekly is the right starting point for most teams. It gives you enough frequency to spot trends early, without requiring the same operational discipline as weekly. You still get 26 data points a year -- compared to 12 monthly or 4 quarterly -- which is plenty to build a meaningful trend line.
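If you want to check those numbers yourself, the arithmetic is simple. Here's a minimal sketch, assuming a 52-week year and treating a month as 52/12 weeks. The names `CADENCE_WEEKS` and `surveys_per_year` are made up for illustration and don't come from any survey tool.

```python
# Weeks between surveys for each cadence (assumes a 52-week year).
CADENCE_WEEKS = {
    "weekly": 1,
    "biweekly": 2,
    "monthly": 52 / 12,
    "quarterly": 13,
}


def surveys_per_year(cadence: str) -> int:
    """Return how many surveys a cadence produces in a 52-week year."""
    return round(52 / CADENCE_WEEKS[cadence])


for name in CADENCE_WEEKS:
    print(f"{name}: {surveys_per_year(name)} data points per year")
```

The same table also tells you the worst-case lag: the value in `CADENCE_WEEKS` is roughly how many weeks can pass before a problem shows up in your scores.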

If you're unsure where to start, start here. You can always move to weekly once you've built the habit of actually reviewing and responding to results.

Biweekly also handles the natural rhythms of work better. If your team runs two-week sprints, syncing surveys to the end of each sprint can make the data more contextually useful. Low workload scores right before a deadline? That's expected. Consistently low scores across multiple sprints? That's a signal.
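One way to separate an expected dip from a real signal is to look for a run of low scores rather than a single one. This is a rough sketch, assuming a 1-to-5 scale, a hypothetical cutoff of 3.0, and three sprints in a row counting as "consistent". All three are assumptions to tune against your own data, not established thresholds.

```python
def is_sustained_low(scores, threshold=3.0, min_run=3):
    """Return True if the most recent `min_run` scores are all below `threshold`.

    `scores` is a list of per-sprint average scores, oldest first.
    The threshold and run length are illustrative assumptions.
    """
    if len(scores) < min_run:
        return False  # not enough history to call it a trend
    return all(score < threshold for score in scores[-min_run:])


# A single dip before a deadline: expected, not flagged.
one_off_dip = is_sustained_low([4.1, 4.0, 2.8, 4.2])

# Low workload scores three sprints in a row: worth a conversation.
sustained_dip = is_sustained_low([4.1, 2.9, 2.7, 2.8])

print(one_off_dip, sustained_dip)
```

The point of the run length is to stop you from reacting to every deadline-week wobble while still catching the pattern that doesn't recover.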

Monthly

Monthly is where things start to break down, especially at a growing company.

The problem isn't the number. It's the lag. A lot can change in four weeks. If someone is struggling in week one and you don't check in again until week five, you've missed the window to help. By the time you see the signal, the situation may have already resolved itself -- or it may have quietly gotten much worse.

Monthly also makes it harder to attribute causes. When you review your monthly results, you're looking back at 30 days of experiences, conversations, decisions, and events. Trying to figure out what drove a drop in satisfaction scores is like trying to work out which meal made you sick after a month of eating three meals a day.

Monthly is fine as a fallback if your team is strongly resistant to more frequent check-ins. But it's a floor, not a target.

Quarterly (and why it doesn't count)

Quarterly isn't really a pulse survey cadence. It's just a short annual survey you do four times. You get the same problems as annual surveys -- stale data, no trend visibility, too much happening between data points to make sense of anything -- with the added downside of people feeling like they just did this.

If someone proposes quarterly pulse surveys, what they actually want is a lighter version of an annual survey. That's a different product for a different problem.

How cadence changes as your team grows

There's a pattern here worth naming explicitly.

Under 30 people: You probably don't need to survey weekly yet. You still have enough visibility into how people are doing through direct contact. Biweekly is the right move -- it builds the habit without overwhelming you with data you don't yet have the infrastructure to act on.

30 to 60 people: This is the zone where things start slipping through the cracks. You can't feel the room anymore. Weekly or biweekly becomes important here, and weekly is worth the investment. You'll catch things that would otherwise surface as sudden resignations or team conflicts.

60 to 100 people: Weekly is the right answer. At this size, you have multiple teams, multiple managers, and multiple cultures forming within your company. The only way to stay close to what's happening is to check in frequently and make sure your team leads are doing the same at the team level.
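The size bands above boil down to a simple lookup. Here's a small sketch of that mapping. The function name `suggested_cadence` is hypothetical, and because the bands overlap at exactly 60 people, putting 60 in the middle band is an assumption. So is returning "weekly" for anything above 100, which is outside the range this article covers.

```python
def suggested_cadence(headcount: int) -> str:
    """Map team size to the starting cadence suggested by the size bands above.

    Assumptions: exactly 60 people falls in the middle band, and sizes
    above 100 (not covered by the article) are treated like the top band.
    """
    if headcount < 1:
        raise ValueError("headcount must be at least 1")
    if headcount < 30:
        return "biweekly"
    if headcount <= 60:
        return "weekly or biweekly, leaning weekly"
    return "weekly"


for size in (12, 45, 80):
    print(size, "->", suggested_cadence(size))
```

Treat the output as a starting point. A 25-person team going through a re-org may well want weekly, and that's a judgment call no lookup table will make for you.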

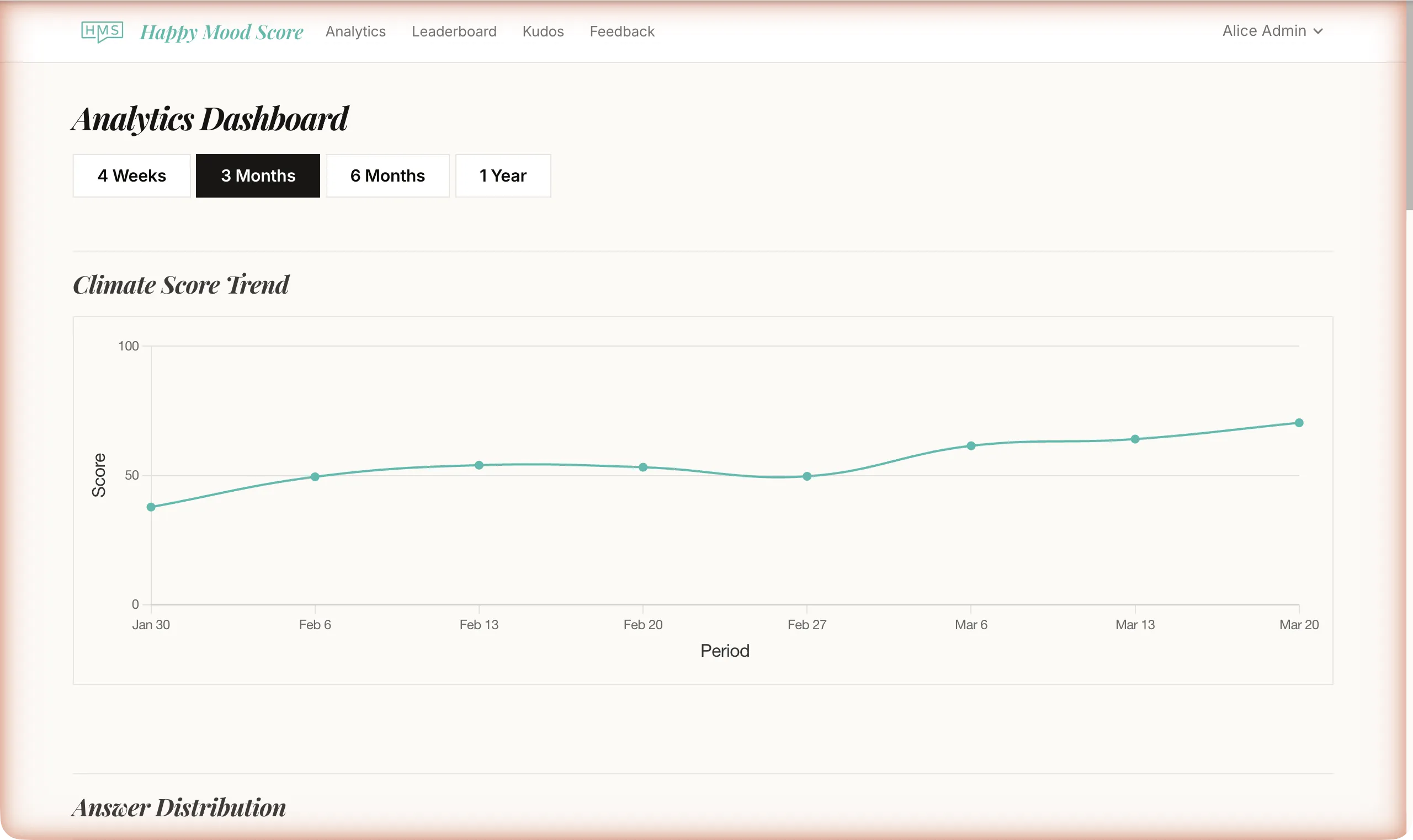

The other thing that changes as you grow: you can start breaking down results by team. A company-wide score is useful. A team-level score is actionable. When you can see that your engineering team is at a 3.2 and your product team is at a 4.4, you know exactly where to direct attention.
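To see why the team-level view matters, here's a minimal sketch of the breakdown. The responses are made up, the 1-to-5 scale is an assumption, and `team_averages` is an illustrative helper, not a function from any particular survey platform.

```python
from collections import defaultdict
from statistics import mean


def team_averages(responses):
    """Group (team, score) pairs and return the mean score per team."""
    by_team = defaultdict(list)
    for team, score in responses:
        by_team[team].append(score)
    return {team: round(mean(scores), 1) for team, scores in by_team.items()}


# Made-up responses on a 1-to-5 scale, purely for illustration.
responses = [
    ("engineering", 3), ("engineering", 4), ("engineering", 3),
    ("product", 5), ("product", 4), ("product", 4),
]

company_wide = round(mean(score for _, score in responses), 1)
averages = team_averages(responses)

print("company-wide:", company_wide)
print("by team:", averages)
```

The company-wide number sits between the two teams and hides the gap. The per-team numbers show you which team to talk to first.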

The real risk: surveying too infrequently

Here's the thing most people get backwards. The common worry is sending too many surveys and annoying people. The real risk is the opposite.

When you survey infrequently, you're reading about a team that no longer exists.

Your company six months ago had different people, different pressures, different relationships, and different challenges than your company today. If you're checking in only once a quarter, the data you're acting on is months old by the time you use it. You're navigating with a map drawn for a different landscape.

The teams with the healthiest cultures tend to survey frequently, not despite caring about their employees, but because of it. They want to know quickly when something's off. They trust frequent, lightweight check-ins because they've proven they'll act on what they hear.

And here's the data point that should settle this: according to research from Lattice, 41% of companies using pulse surveys do them weekly, 35% do them biweekly, and only 24% do them monthly. The teams choosing monthly aren't more considerate of their employees' time. They're often just less committed to doing this consistently.

Response rates of 80 to 90% are achievable when two conditions are met: managers communicate what the surveys are for and what happens with the data, and they visibly act on results. That kind of response rate isn't about frequency -- it's about trust.

Setting expectations with your team before you launch

Before you send the first survey, have a short conversation with your team. This doesn't need to be a big announcement. A paragraph in Slack or two minutes at the next all-hands is enough. Here's what to cover:

Why you're doing this. Not "because we want to measure engagement." Something real: "We're growing fast and I want to make sure we catch problems before they get big. I'd rather hear something's off from a weekly survey than from a resignation letter."

What you'll do with the results. Be specific. "I'll review results every week. If something's trending down, I'll bring it up with the relevant team lead that week." People need to believe the data goes somewhere.

How anonymity works. Individual responses are never visible. Results are shown as team averages. Small teams have a confidentiality threshold -- results don't show until enough people have responded to protect anyone's identity.

How long it takes. "One question. Ten seconds. You answer it right in your email."

That conversation -- or that Slack message -- does more for your response rates than any reminder email or survey platform feature. When people understand why they're being asked and what happens after, they answer.
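If people ask how the confidentiality threshold mentioned above works, it helps to be able to explain the rule plainly. Here's a sketch of the idea, assuming a hypothetical minimum of five responses. The actual number varies by tool and team size, and `reportable_average` is an illustrative name, not any product's API.

```python
from statistics import mean

MIN_RESPONSES = 5  # hypothetical threshold; pick what suits your team sizes


def reportable_average(scores, min_responses=MIN_RESPONSES):
    """Return the team average, or None if too few people have answered."""
    if len(scores) < min_responses:
        return None  # withhold the result to protect individual responses
    return round(mean(scores), 1)


too_few = reportable_average([4, 5, 3])          # only three responses
enough = reportable_average([4, 5, 3, 4, 4])     # five responses

print(too_few, enough)
```

The rule is deliberately blunt: below the threshold nobody sees a number at all, so a score can never be traced back to one or two people.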

The last thing to set is your own expectation. Commit to reviewing results every single time. Not when it's convenient. Every time. If you send a survey and don't look at the results, you've told your team their answers don't matter. Don't do that.

Once you've built the review habit, consider sharing a short summary with your team each week. Something like: "Workload scores dipped a bit this week -- I'm following up with a few team leads. Satisfaction scores are holding steady at 4.2. Thanks for answering." That takes two minutes to write, and it does more for trust than almost anything else.

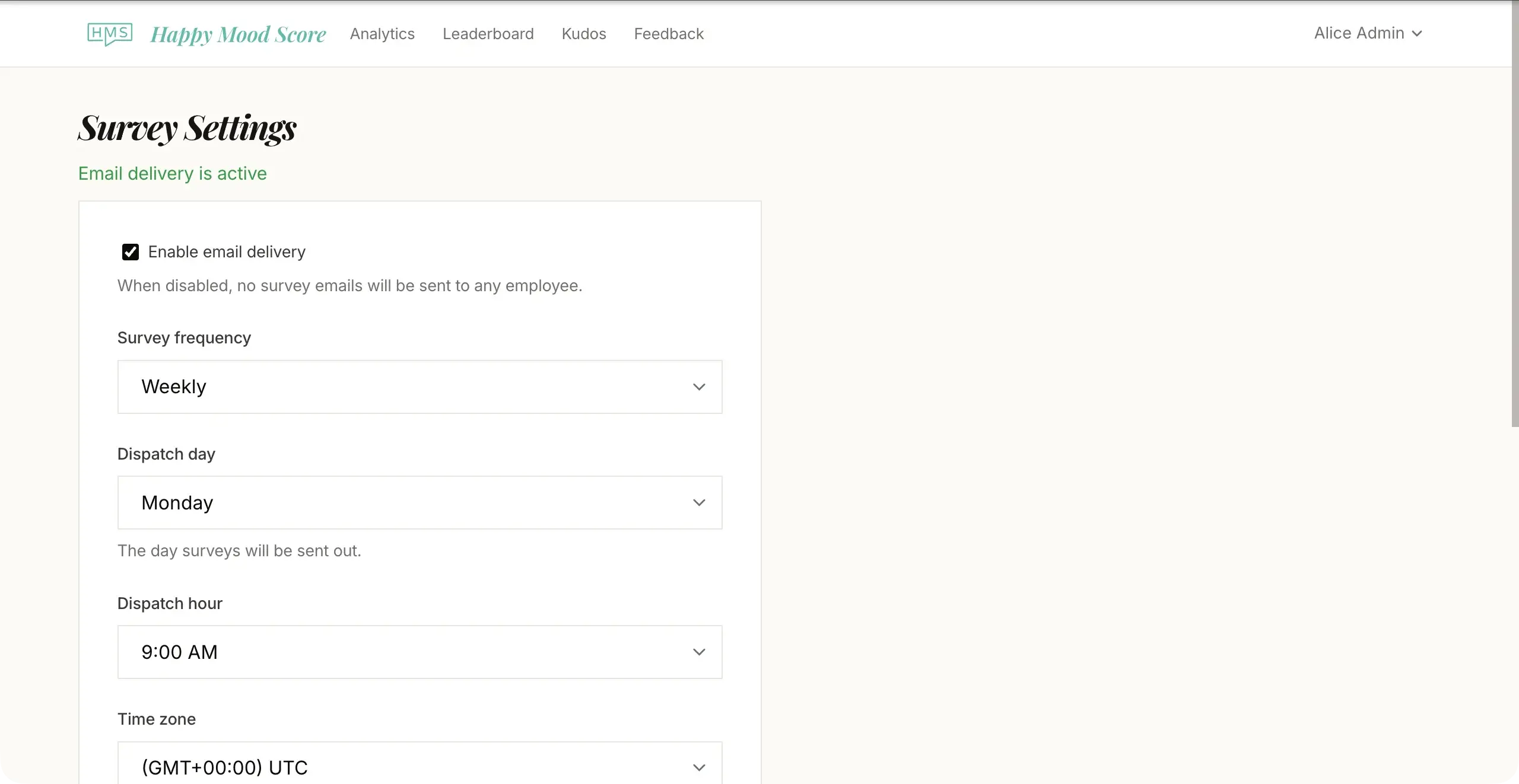

When you're ready to set this up, tools like Happy Mood Score are built specifically for this cadence -- short surveys, anonymous team-level results, and a simple dashboard for tracking trends over time.

Start this week

If you take one thing from this: don't let perfect be the enemy of useful.

Pick weekly or biweekly. Send one question. Review the results. Tell your team what you saw. Repeat.

You'll figure out your ideal cadence within the first month. And you'll know almost immediately whether you went too slow -- because by week four you'll be reading scores that feel disconnected from what you're actually seeing in the team, and you'll wish you'd checked in sooner.

The right frequency isn't the one that feels safest. It's the one that keeps you close enough to your team to actually help.