You launch an anonymous survey. You tell your team their responses are confidential. You promise nobody will see individual answers. And then you stare at a 40% response rate and wonder what went wrong.

Here's what went wrong: your team doesn't believe you.

Not because you're lying. Because they've been told surveys were "anonymous" before, and then someone got a pointed question from their manager about a comment that sounded suspiciously like something they wrote. Or because they work on a seven-person team and they know that "team averages" with seven people aren't very anonymous. Or because they've seen the admin panel of another tool and noticed it shows exactly who hasn't responded yet.

Trust in anonymous surveys isn't a switch you flip. It's something you build -- and at a small company where everyone knows everyone, the mechanics of building it are different from what you'll read in enterprise HR guides.

Why your team is skeptical (and why they're right to be)

Before you try to fix trust, it helps to understand exactly where it breaks down. These are the specific fears your team has, even if they'd never say them out loud:

"My manager can see what I said." This is the big one. If employees think their direct manager -- the person who decides their raise, their projects, their future at the company -- can trace a response back to them, they will either not respond or lie. Every time. It doesn't matter what the privacy policy says. What matters is what people believe.

"There are only eight of us. How anonymous can it be?" This is a legitimate concern, and many survey tools don't address it properly. If you have a five-person design team and three of them respond, showing that team's average narrows each answer down to one of three people who all know each other. Add any demographic filter and you've identified someone.

"They track who hasn't responded. So they know who did." Some tools send reminders to people who haven't answered yet, which means the system knows who answered and who didn't. And if the system knows, the admin probably knows too. The first half of that logic is right. What good tools do is keep participation tracking separate from response data, so knowing who responded never reveals what they said.

"Last time I was honest, something changed -- and not in a good way." Maybe it wasn't even at this company. Maybe it was at a previous job. But one bad experience with a "confidential" survey is enough to make someone cautious for years. You're not just fighting your team's distrust. You're fighting every previous employer's broken promises too.

These aren't irrational fears. They're reasonable responses to the way many survey tools actually work. Addressing them means going beyond "trust us, it's anonymous."

Anonymous vs. confidential: the difference your team needs to understand

These two words get used interchangeably, and they shouldn't. The distinction matters, and explaining it to your team is one of the fastest ways to build trust.

Anonymous means no identifying information is collected at all. Nobody -- not the tool, not the admin, not the CEO -- can connect a response to a person. The response exists, but there's no record of who submitted it.

Confidential means the system knows who responded, but that information is protected and not visible to anyone reviewing the results. The admin sees aggregated scores, not individual responses.

Most modern pulse survey tools are confidential, not truly anonymous -- and that's actually fine. Confidentiality lets the tool send reminders to non-responders, track response rates per team, and ensure nobody submits twice. True anonymity would break all of those features.

What matters is the separation. In a well-designed tool, the part of the system that knows "Maria responded" is completely walled off from the part that knows "someone on the product team gave a 2 out of 5." Those two data points never connect, and no admin can make them connect.

When you explain this to your team, be specific: "The tool knows whether you've responded so it can send reminders. It does not know what you said. I cannot see individual answers. I see team averages." That's clearer and more honest than just saying "it's anonymous."
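Under the hood, that separation can be as simple as two stores that never share a key. The sketch below is a minimal illustration, assuming a hypothetical in-memory `SurveyStore` (not any particular tool's design): one set records who has responded so reminders work, and one list records team and score with no employee ID attached.

```python
from dataclasses import dataclass, field


@dataclass
class SurveyStore:
    """Hypothetical store that keeps 'who responded' apart from 'what was said'."""

    # Participation: who has answered. Used only for reminders and duplicate checks.
    participants: set[str] = field(default_factory=set)
    # Responses: team label and score only. No employee ID is ever stored here.
    responses: list[tuple[str, int]] = field(default_factory=list)

    def submit(self, employee_id: str, team: str, score: int) -> None:
        # Prevent double submissions using participation data alone.
        if employee_id in self.participants:
            raise ValueError("already responded")
        self.participants.add(employee_id)
        # The response record deliberately omits employee_id.
        self.responses.append((team, score))

    def needs_reminder(self, roster: list[str]) -> list[str]:
        # Reads participation only; never touches scores.
        return [person for person in roster if person not in self.participants]


store = SurveyStore()
store.submit("maria", "product", 2)
store.submit("li", "product", 4)
print(store.needs_reminder(["maria", "li", "sam"]))  # ['sam']
```

Reminders read only `participants`; results read only `responses`. A production system would also have to guard against quieter leaks, such as timestamps or the order in which responses arrive, which this sketch doesn't address.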

Confidentiality thresholds: the feature most people don't understand

This is the single most important trust mechanism in any survey tool, and most articles about anonymous surveys either skip it or mention it in one sentence.

A confidentiality threshold is a minimum number of responses required before results are shown for a group. If the threshold is set to five and only four people on a team responded, the results for that team are suppressed entirely. The admin sees nothing -- not the average, not the distribution, nothing. Just a message that says "not enough responses."

Here's why this matters so much at a small company.

Say you have a customer success team of six people. Without a threshold, the dashboard shows results no matter how few people respond. In a group this small, a score that's dramatically different from the others is easy to attribute. "Well, five of us are happy, so the person who gave a 1 must be..." You can see where this goes.

With a threshold of five, results only show when at least five people have responded. That's better, and the math is the reason: averaged across five or six responses, one outlier score blends in instead of standing out. You can see the team's mood. You can't identify the dissenter.
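The arithmetic is worth seeing once. Anyone who knows the published average, the number of responses, and their own score can work out the total of everyone else's scores. The hypothetical helper below does exactly that, assuming a simple mean on a 1-5 scale:

```python
def others_total(average: float, responses: int, my_score: int) -> float:
    """Total of everyone else's scores, as inferable from a published team average."""
    return average * responses - my_score


# Two responses, average 3.0, and I gave a 5: the other person's exact score is exposed.
print(others_total(3.0, 2, 5))  # 1.0

# Five responses, average 3.8, and I gave a 5: 14 points spread over four people.
print(others_total(3.8, 5, 5))
```

With two respondents, the "average" is really one colleague's answer. With five, a total of 14 across four people fits many combinations (4+4+5+1, 3+4+3+4, and so on), so no single score can be recovered. That is the protection a threshold buys.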

How to choose the right threshold:

- Teams of 5-10: Set the threshold at 4-5. This means results might occasionally be suppressed, but that's the right trade-off. Better to have no data than data that compromises someone.

- Teams of 10-20: A threshold of 5 works well. You'll almost always hit it, and the aggregation protects individuals effectively.

- Company-wide results: The threshold matters less at the company level (30+ people responding naturally protects anonymity), but keep it in place anyway. Consistency builds trust.

The key thing to communicate to your team: when a threshold isn't met, nobody sees anything. Not a partial result. Not a "we need more responses" note with a score attached. Nothing. That's the commitment.
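Here is what that commitment can look like as logic. This is a minimal sketch, assuming scores on a 1-5 scale and hypothetical `team_result` and `render` functions; real tools implement this in their own way. The detail that matters is that the threshold check runs before any aggregate is computed, so there is no partial result to leak.

```python
from statistics import mean
from typing import Optional


def team_result(scores: list[int], threshold: int = 5) -> Optional[dict]:
    """Aggregate a team's 1-5 scores, or return None if the threshold isn't met."""
    if len(scores) < threshold:
        # Suppressed entirely: no average, no distribution, not even the count.
        return None
    return {
        "responses": len(scores),
        "average": round(mean(scores), 1),
        "distribution": {value: scores.count(value) for value in range(1, 6)},
    }


def render(team: str, scores: list[int], threshold: int = 5) -> str:
    """What the admin dashboard would show for one team."""
    result = team_result(scores, threshold)
    if result is None:
        return f"{team}: not enough responses"
    return f"{team}: average {result['average']} from {result['responses']} responses"


print(render("Design", [4, 2, 5]))         # Design: not enough responses
print(render("Support", [4, 2, 5, 3, 4]))  # Support: average 3.6 from 5 responses
```

A team of 5-10 people might pass `threshold=4` instead; either way, the same check applies to every group, including company-wide results.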

Seven things that actually build survey trust

Trust isn't built by declaring surveys anonymous. It's built through specific, repeated actions that demonstrate you mean it. Here's what works:

1. Explain the mechanics before the first survey

Send a message to your team before the first survey goes out. Not a corporate announcement -- a real message. Cover three things: why you're doing this, how the data works, and what you'll never see.

Something like: "Starting next Monday, you'll get a short survey question by email once a week. It's one question, takes ten seconds. Your responses are confidential -- I see team averages, never individual answers. If fewer than five people on a team respond, I don't see that team's results at all. I'm doing this because we're growing fast and I want to catch problems before they become big ones."

That paragraph does more for trust than a ten-page privacy policy.

2. Show your team what the admin dashboard looks like

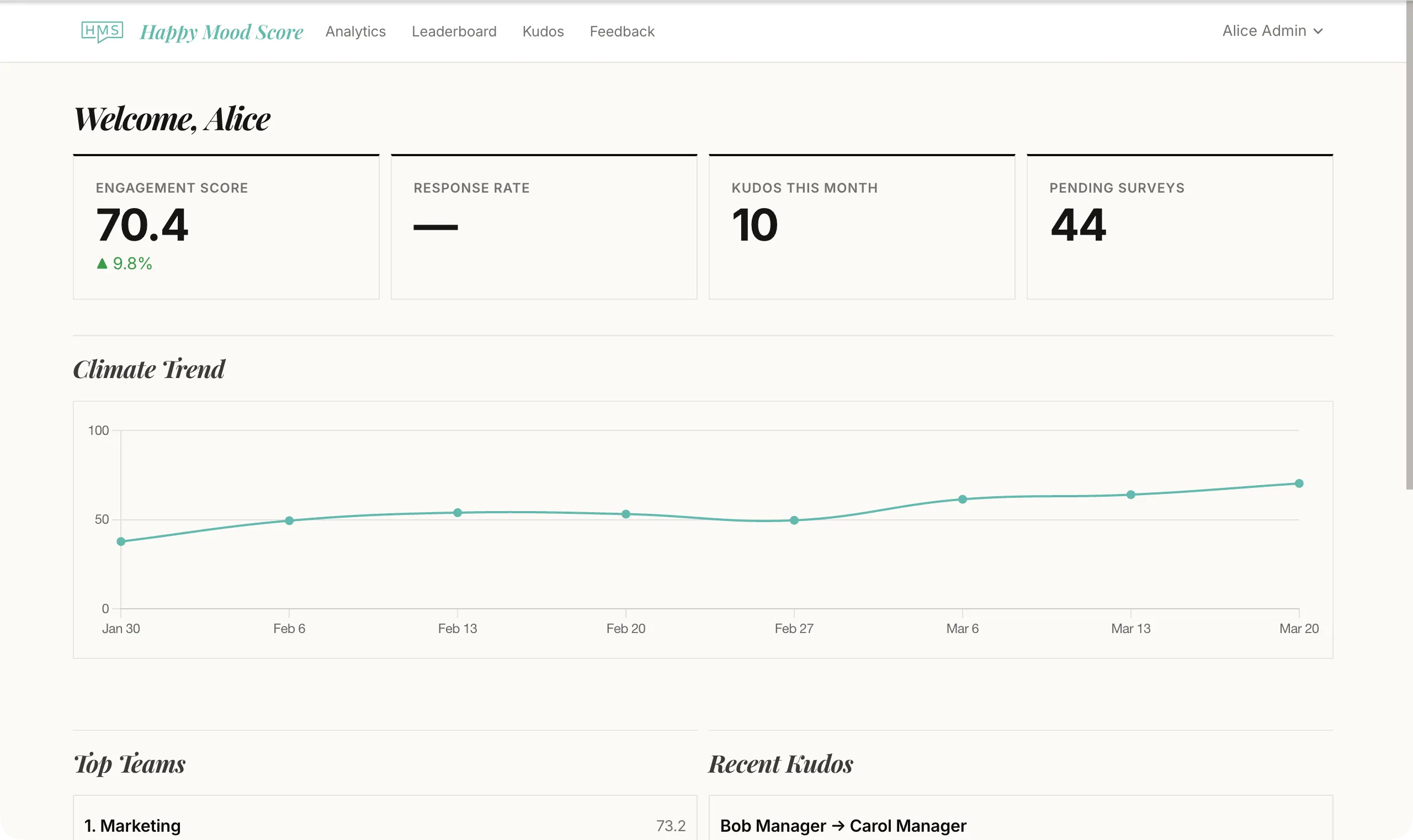

This is the move nobody makes, and it's the most powerful one. Share your screen for 60 seconds in a team meeting. Show them the dashboard. Let them see that it displays averages, trend lines, and team scores -- not names. Let them see the "not enough responses" message on a team that didn't hit the threshold.

When people can see with their own eyes that there's no "view individual responses" button, skepticism drops fast.

3. Never speculate about who said what

This should be obvious, but it needs saying. If a survey result comes back with a low score and you say in a meeting, "I bet that's because of the re-org -- a couple of people on the backend team seemed frustrated," you've just told everyone that you're trying to figure out who said what. Even if you weren't. Even if your intentions were good.

Talk about scores. Talk about trends. Talk about what you're going to do. Never talk about who might have given a specific answer. Ever.

4. Act on results visibly and quickly

Nothing builds survey trust faster than this. When people give honest feedback and then see something change, they learn that honesty is rewarded. When they give feedback and nothing happens, they learn that honesty is pointless.

You don't need to solve every problem the survey surfaces. You need to acknowledge what you saw and commit to one thing. "Workload scores dropped across the board this week. I'm going to review sprint scope with the team leads before next Monday." That's enough. The commitment is the point.

5. Share the results back with the team

Don't keep survey data locked in a dashboard only you can see. Share it. A short weekly or biweekly summary: "Our satisfaction score this week was 3.9, down slightly from 4.1 last week. Workload scores are stable. Growth scores ticked up." Three sentences. Takes a minute to write.

When people see their own data reflected back to them, two things happen. First, they confirm the tool is actually working (their low score showed up in the average). Second, they see that someone is paying attention. Both are trust builders.

6. Don't chase 100% response rates

This one's counterintuitive. The moment you start tracking who hasn't responded and pressuring them to fill it out, you've undermined the whole premise. The survey is supposed to be voluntary. If people feel surveilled for not responding, the trust cost outweighs the data gain.

One gentle automated reminder is fine. Following up personally with "Hey, I noticed you haven't filled out the survey" is not. The line between a nudge and pressure is thin, and your team knows the difference.

Aim for 70-80%. That's a healthy, honest response rate. If you're below 50%, the problem isn't people forgetting. The problem is they don't trust the process yet.

7. Give it time -- and don't flinch at bad scores

The first survey will probably get cautious, middle-of-the-road answers. People are testing the water. By the third or fourth survey, if you've been sharing results and acting on them, the answers get more honest. The scores might actually go down -- not because things got worse, but because people feel safe enough to tell you the truth.

That's a good sign. A score that drops because people are being honest is infinitely more valuable than a high score built on politeness.

The founder's dilemma: surveying a team you built

If you're a founder or CEO running surveys at a company with 20 to 40 people, there's a specific trust problem that nobody talks about: you're the one being evaluated.

When a survey asks "Does leadership communicate openly?", or satisfaction scores dip right after a strategic decision you made, your team knows the results go to you, and that you'll know the feedback is about you. Even with perfect anonymity mechanics, this creates hesitation.

A few things help:

Acknowledge it. "I know some of these questions are about how I'm doing as a leader. I genuinely want honest answers. A 2 out of 5 is more useful to me than a polite 4." Saying this out loud -- and meaning it -- gives people permission to be honest.

Don't review results alone. If you have a co-founder or a people ops hire, review together. It's harder to take low scores personally when someone else is looking at the same data and helping you interpret it.

Respond to low scores with curiosity, not defensiveness. The first time leadership scores drop, what you do next defines the entire survey program. If you get quiet, people notice. If you get defensive, people notice more. If you say "Leadership scores dropped this week -- I'd like to understand what's behind that," you've just proved that honest feedback is safe.

A note on GDPR and employee surveys in Europe

If your team is in Europe -- and if you're reading this, there's a good chance it is -- GDPR adds a layer that actually works in your favor when building trust.

Under GDPR, collecting employee feedback is processing personal data, even when responses are confidential. That means you need a lawful basis (legitimate interest is the most common for pulse surveys), you need to tell employees what data you collect and how it's processed, and you need to keep that data secure.

Here's the trust opportunity: GDPR compliance isn't just a legal checkbox. It's a credibility signal. When you can tell your team "this tool is GDPR compliant, your data is stored in Europe, and here's exactly what's collected and what isn't," you're giving them a concrete reason to trust the process. It's not just "trust me" -- it's "trust me, and here's the legal framework that enforces it."

A few practical things to get right:

- Data minimization: Only collect what you need. A pulse survey needs a response and a team identifier for aggregation. It doesn't need demographic data, device fingerprints, or location.

- Right of access: Employees can ask what data you hold about them. With a properly confidential survey tool, the answer is simple: participation records (whether they responded), but no response content that can be tied back to them (what they said).

- Data residency: Where is the survey data stored? If it's a US-based tool with servers in Virginia, that's a different conversation under GDPR than a European tool with European hosting. Know the answer before someone asks.
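In data terms, minimization and right of access can be sketched together. The record types below are hypothetical and assume the participation/response split described earlier: the response record holds only what aggregation needs, and an access request is answered from participation records alone, because responses carry no employee identifier to search by.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ResponseRecord:
    """Everything a pulse survey needs for aggregation, and nothing more."""
    survey_id: str
    team: str
    score: int  # 1-5; no name, demographics, device fingerprint, or location


@dataclass(frozen=True)
class ParticipationRecord:
    """Who responded to which survey. Holds no answer content."""
    survey_id: str
    employee_id: str


def access_request(employee_id: str, participation: list[ParticipationRecord]) -> list[dict]:
    """Hypothetical right-of-access export: participation only, since responses can't be linked."""
    return [asdict(p) for p in participation if p.employee_id == employee_id]


participation = [ParticipationRecord("2024-w10", "maria"), ParticipationRecord("2024-w10", "li")]
print(access_request("maria", participation))
# [{'survey_id': '2024-w10', 'employee_id': 'maria'}]
```

The survey ID format here is made up for illustration. What matters is the shape: nothing in `ResponseRecord` could answer the question "what did Maria say?"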

If you're in Germany and have a works council (Betriebsrat), loop them in before launching surveys. Works councils have co-determination rights on employee monitoring, and pulse surveys can fall into that category. Getting their buy-in early prevents problems later and signals to employees that this was done properly.

The trust arc: why the first survey is the hardest

Trust in anonymous surveys follows a predictable arc. Knowing this helps you not panic when the first few rounds feel underwhelming.

Survey 1: Low-to-moderate response rate. Cautious, non-committal answers. Scores cluster around 3-4 out of 5. People are watching to see what happens. This is normal.

Surveys 2-4: Response rates climb as people see that (a) it only takes ten seconds and (b) someone is actually reading the results. Scores might stay cautious, but you'll start to see more variation. That variation is honesty emerging.

Surveys 5-8: This is where it gets real. If you've been sharing results and acting on feedback, response rates hit 70-80% and the data gets genuinely useful. You'll see team-level differences. You'll see scores respond to real events. Scores might dip -- not because morale dropped, but because people stopped sugar-coating.

Month 3+: Survey responses become part of the team's rhythm. People expect the Monday email. They notice when something changes in response to their feedback. Trust is now self-reinforcing.

The entire arc takes about two months of consistent surveying, sharing, and acting. Two months. That's the investment. And the return is a team that tells you the truth before problems become crises.

Start building trust this week

You don't need a perfect system. You need a credible one. And credibility starts with a few simple things:

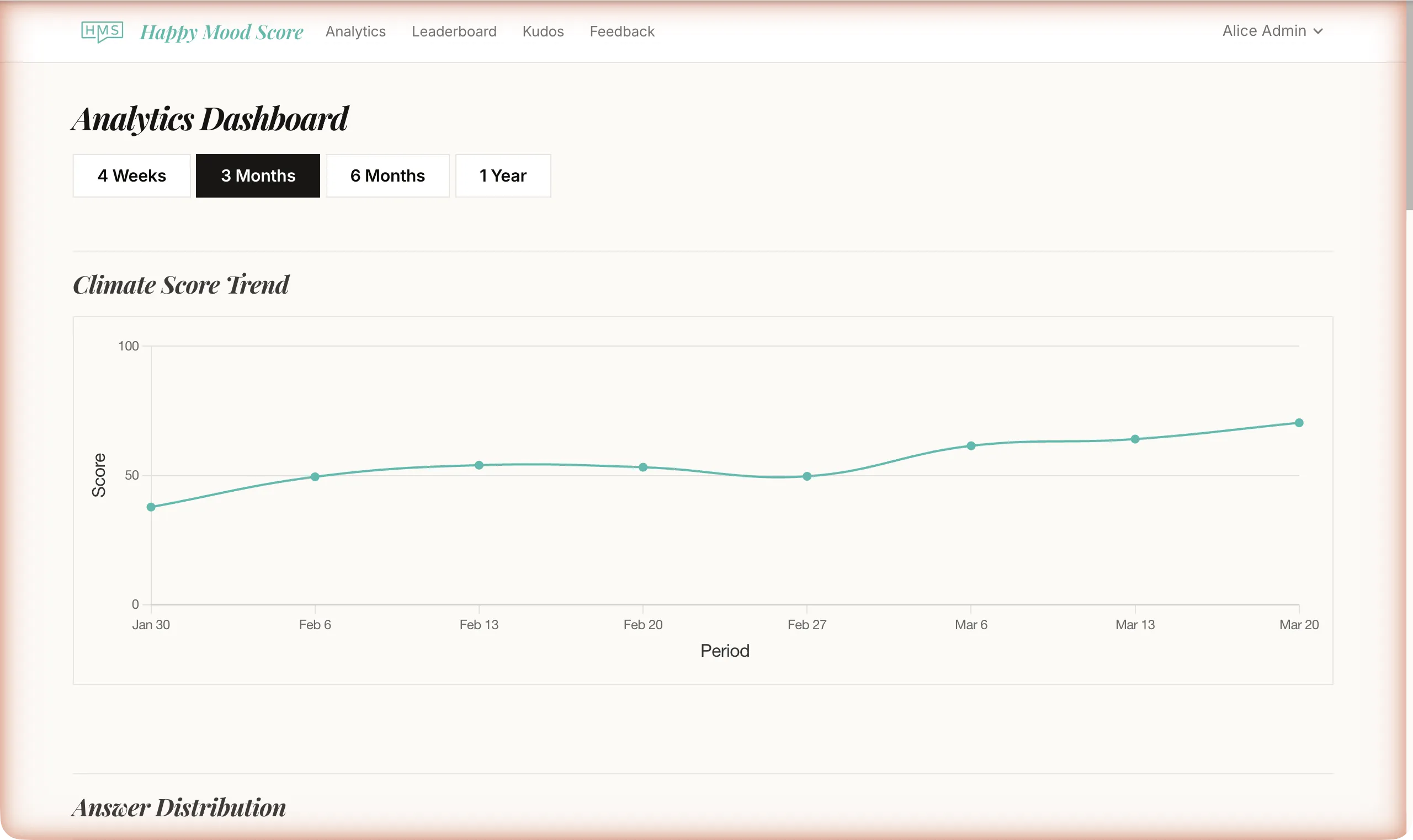

1. Choose a tool with real confidentiality thresholds -- not one that just promises anonymity in its marketing copy. Happy Mood Score suppresses team results below the threshold, separates participation tracking from response data, and stores everything in Europe. It's free for up to 20 people.

2. Send that message to your team. Tell them why, tell them how the data works, and tell them what you'll never see. Be specific.

3. Run the first survey and share the results. Even if the data is boring. Even if the response rate is low. The act of sharing is the first deposit in the trust account.

The tool matters less than the behavior. But the right tool makes the right behavior easier. And the right behavior, repeated over a few weeks, turns skepticism into honesty -- which is the only thing that makes employee surveys worth running at all.